The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Ashes of the Singularity Escalation

Seen as the holy child of DirectX12, Ashes of the Singularity (AoTS, or just Ashes) has been the first title to actively explore as many of DirectX12's features as it possibly can. Stardock, the developer behind the Nitrous engine which powers the game, has ensured that the real-time strategy title takes advantage of multiple cores and multiple graphics cards, in as many configurations as possible.

As a real-time strategy title, Ashes is all about responsiveness during both wide open shots and concentrated battles. With DirectX12 at the helm, the ability to issue more draw calls per second allows the engine to work with substantial unit depth and effects. Other RTS titles had to rely on combined draw calls to achieve this, which made some combined unit structures ultimately very rigid.

Stardock clearly understands the importance of an in-game benchmark, and ensured that such a tool was available and capable from day one. With all the additional DX12 features in use, being able to characterize how they affected the title was important for the developer. The in-game benchmark runs a four-minute, fixed-seed battle environment with a variety of shots, and outputs a vast amount of data to analyze.

For our benchmark, we run a fixed v2.11 version of the game due to some peculiarities of the splash screen added after the merger with the standalone Escalation expansion, and have an automated tool to call the benchmark on the command line. (Prior to v2.11, the benchmark also supported 8K/16K testing, however v2.11 has odd behavior which nukes this.)

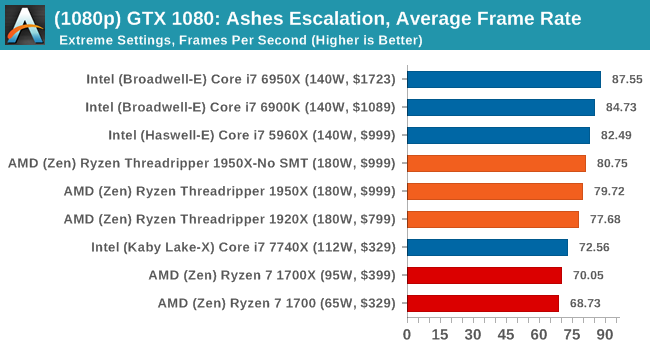

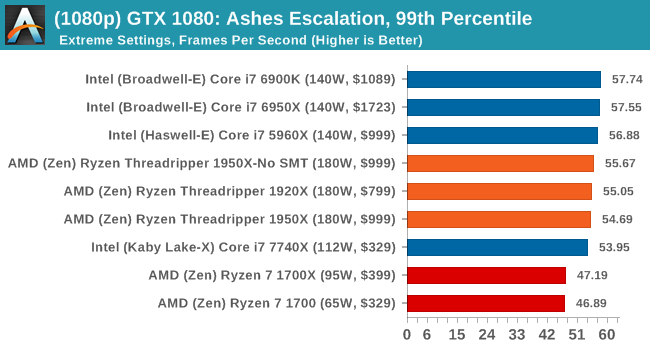

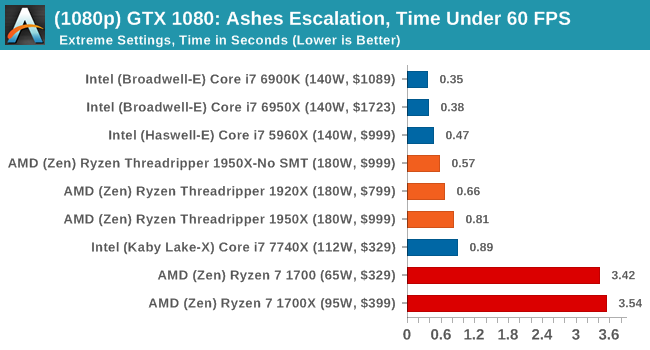

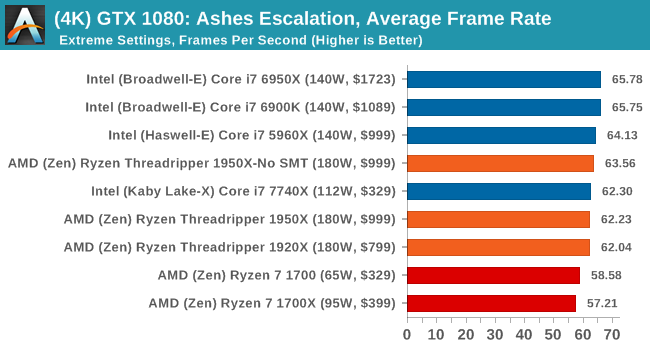

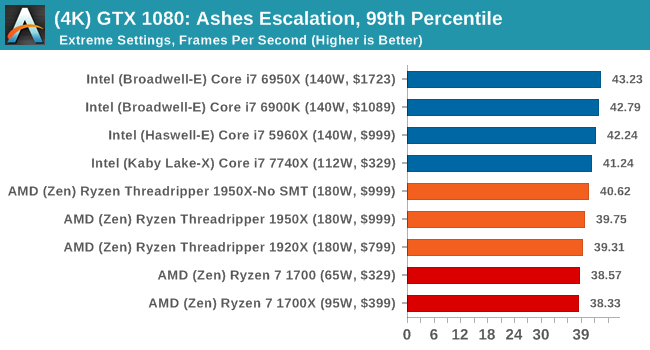

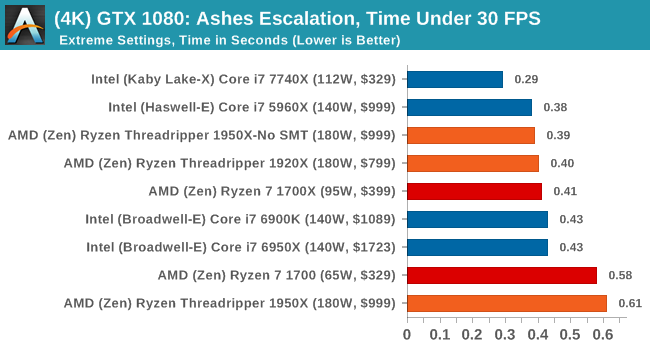

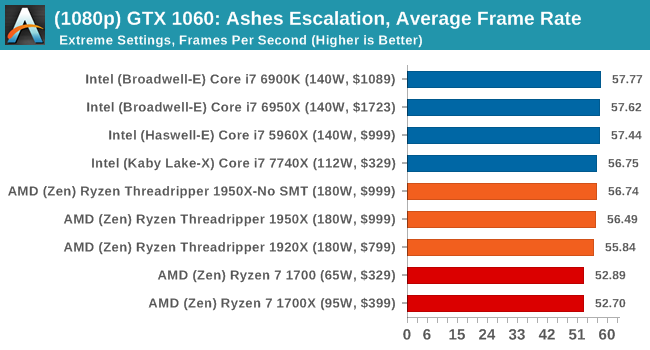

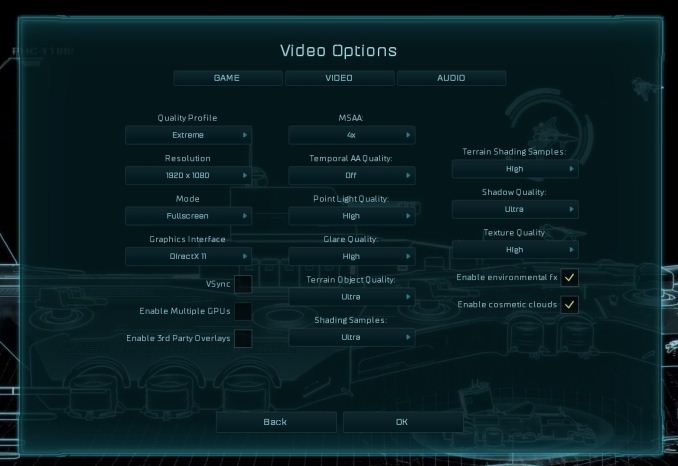

At both 1920x1080 and 4K resolutions, we run the same settings. Ashes has dropdown options for MSAA, Light Quality, Object Quality, Shading Samples, Shadow Quality, Textures, and separate options for the terrain. There are several presets, from Very Low to Extreme: we run our benchmarks at Extreme settings, and take the frame-time output for our average, percentile, and time-under analysis.
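As a rough illustration of how average, percentile, and time-under figures can be derived from a list of frame times, here is a minimal sketch. It is not our actual tooling: the function name, the 99th percentile, the 60 FPS threshold, and the nearest-rank percentile method are all assumptions chosen for the example.

```python
import math


def summarize_frame_times(frame_times_ms, percentile=99.0, fps_threshold=60.0):
    """Summarize per-frame render times given in milliseconds.

    Hypothetical helper: the percentile and FPS threshold defaults are
    assumptions for illustration, not values taken from the review.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    # Average FPS: frames rendered divided by total elapsed seconds.
    total_ms = sum(frame_times_ms)
    average_fps = len(frame_times_ms) / (total_ms / 1000.0)

    # Percentile frame time using the nearest-rank method on sorted times.
    # A high percentile picks out the slow frames, so the resulting FPS
    # figure is lower than the average.
    ordered = sorted(frame_times_ms)
    rank = max(1, math.ceil(percentile / 100.0 * len(ordered)))
    percentile_ms = ordered[rank - 1]
    percentile_fps = 1000.0 / percentile_ms

    # Time under: total seconds spent in frames slower than the threshold.
    limit_ms = 1000.0 / fps_threshold
    time_under_s = sum(t for t in frame_times_ms if t > limit_ms) / 1000.0

    return {
        "average_fps": average_fps,
        "percentile_fps": percentile_fps,
        "time_under_s": time_under_s,
    }


if __name__ == "__main__":
    # Nine fast frames and one slow frame, in milliseconds.
    sample = [10.0] * 9 + [40.0]
    print(summarize_frame_times(sample))
```

The time-under figure counts only the time spent in slow frames, so a run with a high average but frequent stutter is penalized where the plain average would hide it.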

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

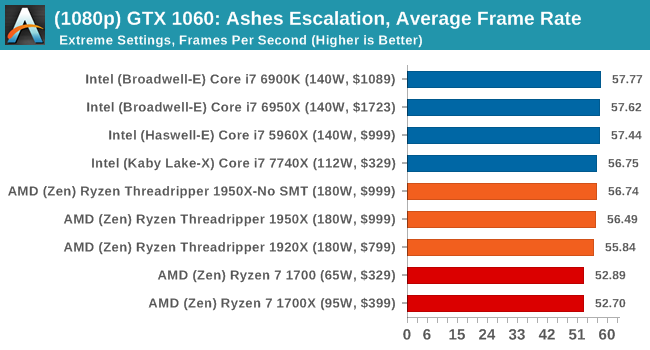

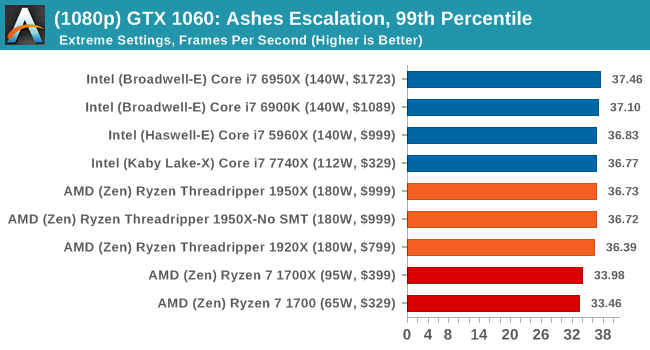

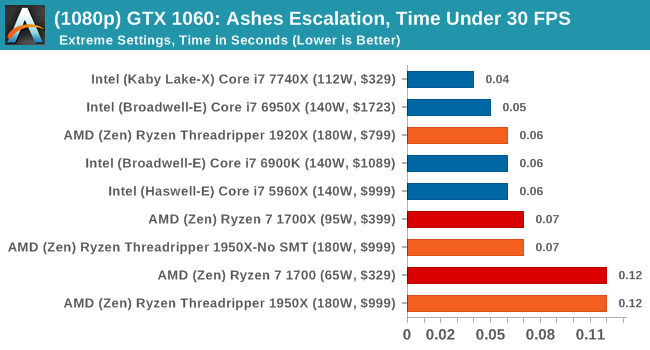

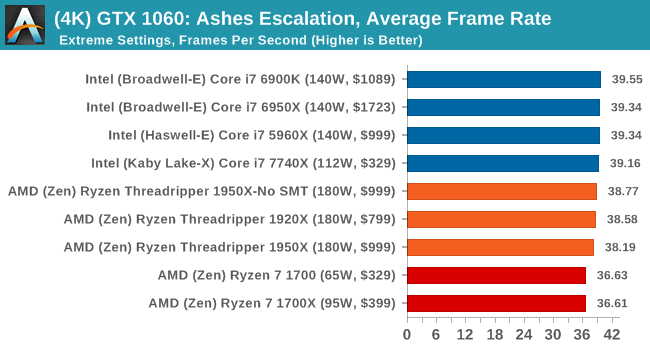

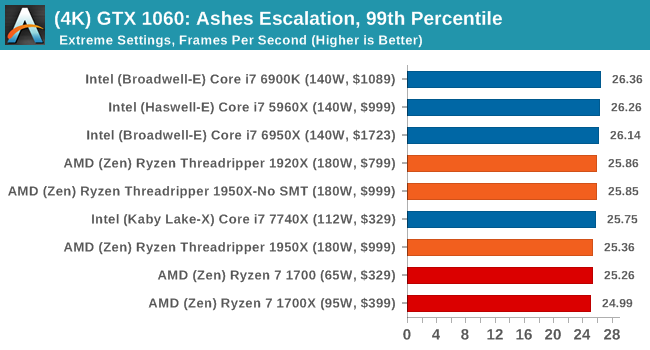

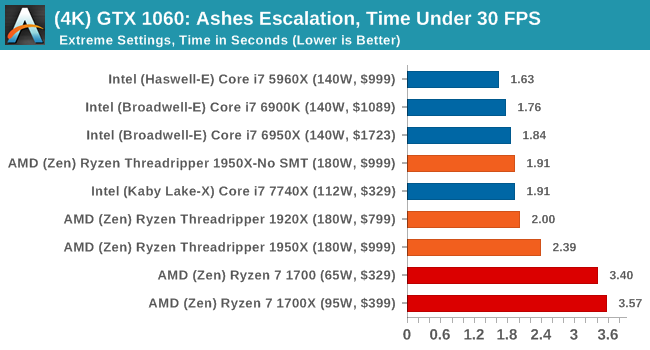

ASUS GTX 1060 Strix 6G Performance

1080p

4K

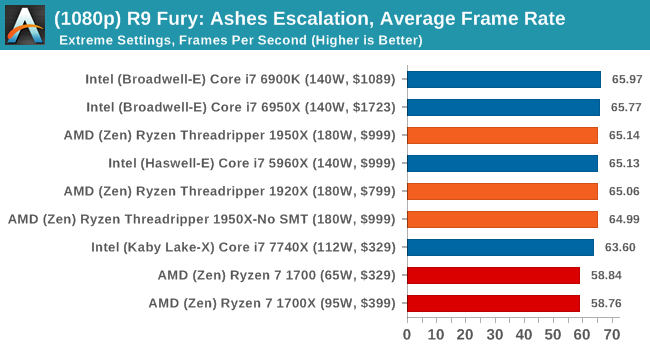

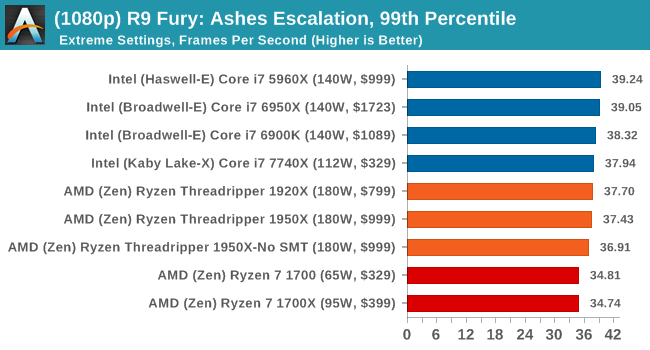

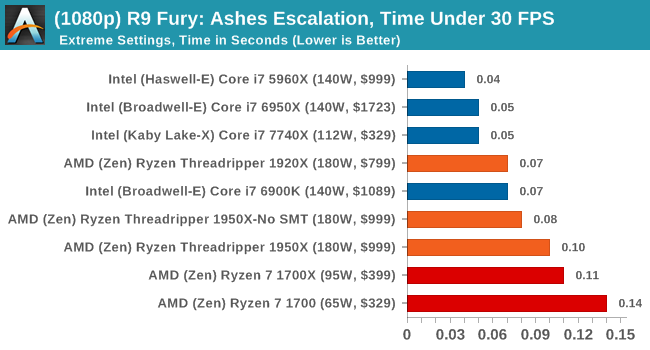

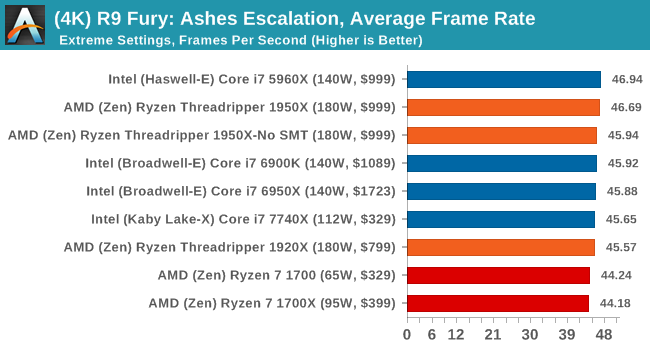

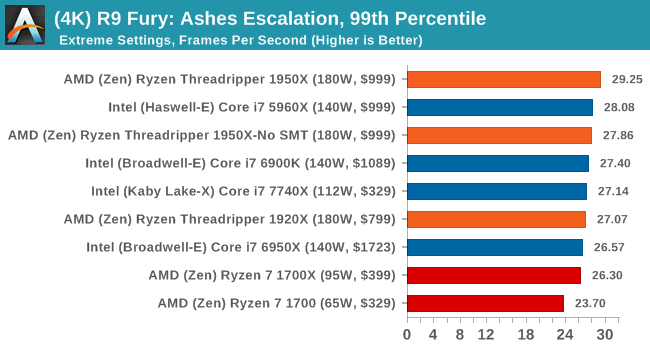

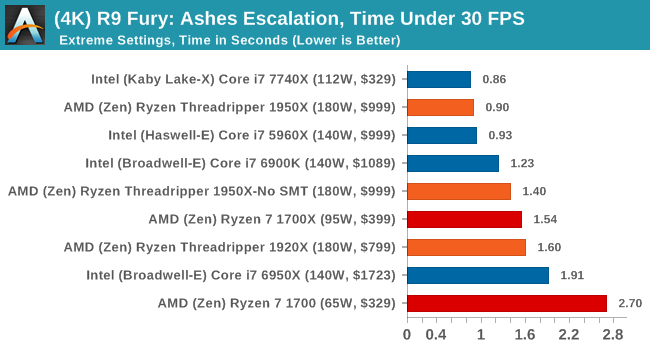

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

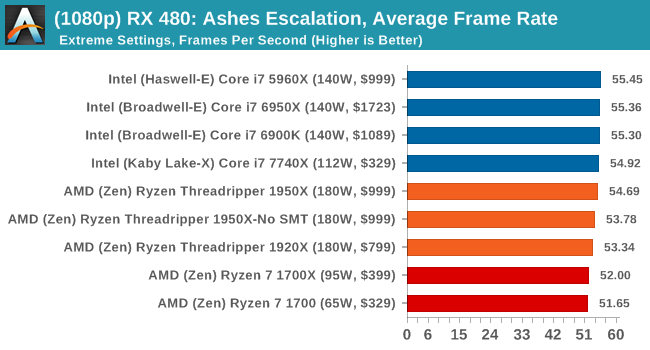

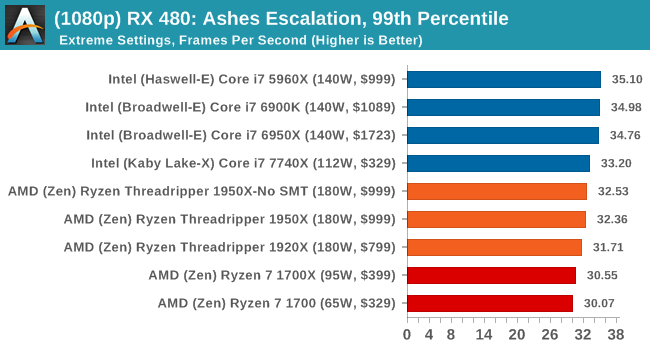

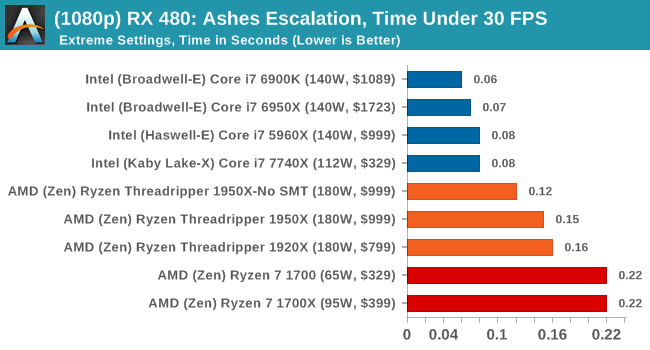

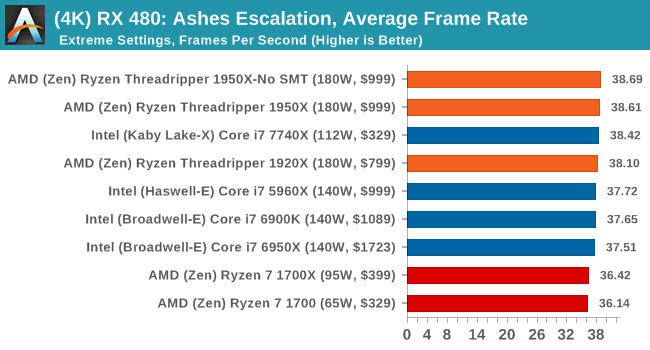

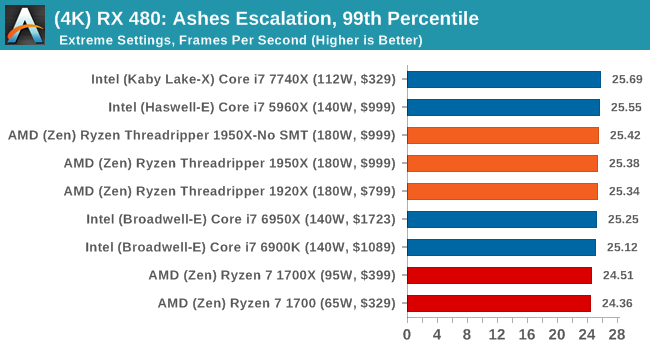

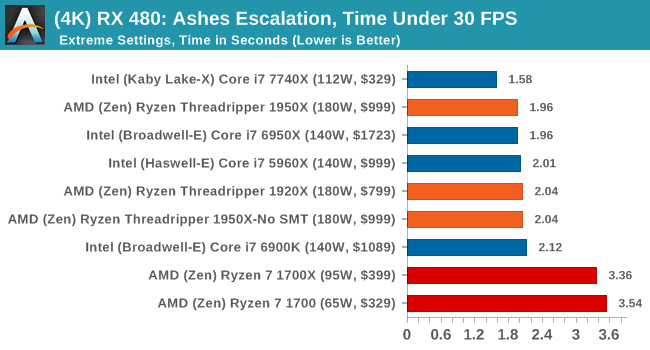

Sapphire Nitro RX 480 8G Performance

1080p

4K

AMD gets in the mix a lot with these tests, and in a number of cases pulls ahead of the Ryzen chips in the Time Under analysis.

347 Comments


mapesdhs - Friday, August 11, 2017 - link

And consoles are on the verge of moving to many-cores main CPUs. The inevitable dev change will spill over into PC gaming.

RoboJ1M - Friday, August 11, 2017 - link

On the verge?

All major consoles have had a greater core count than consumer CPUs, not to mention complex memory architectures, since, what, 2005?

One suspects the PC market has been benefiting from this for quite some time.

RoboJ1M - Friday, August 11, 2017 - link

Specifically, the 360 had 3 general purpose CPU cores

And the PS3 had one general purpose CPU core and 7 short pipeline coprocessors that could only read and write to their caches. They had to be fed by the CPU core.

The 360 had unified program and graphics ram (still not common on PC!)

As well as its large high speed cache.

The PS3 had separate program and video ram.

The Xbox One and PS4 were super boring PCs in boxes. But they did have 8 core CPUs. The x1x is interesting. It's got unified ram that runs at ludicrous speed. Sadly it will only be used for running games in 1800p to 2160p at 30 to 60 FPS :(

mlambert890 - Saturday, August 12, 2017 - link

Why do people constantly assume this is purely time/market economics?

Not everything can *be* parallelized. Do people really not get that? It isn't just developers targeting a market. There are tasks that *can't be parallelized* because of the practical reality of dependencies. Executing ahead and out of order can only go so far before you have an inverse effect. Everyone could have 40 core CPUs... It doesn't mean that *gaming workloads* will be able to scale out that well.

The work that lends itself best to parallelization is the rendering pipeline and that's already entirely on the GPU (which is already massively parallel)

Magichands8 - Thursday, August 10, 2017 - link

I think what AMD did here though is fantastic. In my mind, creating a switch to change modes vastly adds to the value of the chip. I can now maximize performance based upon workload and software profile and that brings me closer to having the best of both worlds from one CPU.

Notmyusualid - Sunday, August 13, 2017 - link

@ rtho782

I agree it is a mess, and also, it is not AMD's fault.

I've had a 14c/28t Broadwell chip for over a year now, and I cannot launch Tomb Raider with HT on, nor GTA5. But most s/w is indifferent to the amount of cores presented to them, it would seem to me.

BrokenCrayons - Thursday, August 10, 2017 - link

Great review but the word "traditional" is used heavily. Given the short lifespan of computer parts and the nature of consumer electronics, I'd suggest that there isn't enough time or emotional attachment to establish a tradition of any sort. Motherboards sockets and market segments, for instance, might be better described in other ways unless it's becoming traditional in the review business to call older product designs traditional. :)

mkozakewich - Monday, August 14, 2017 - link

Oh man, but we'll still gnash our teeth at our broken tech traditions!

lefty2 - Thursday, August 10, 2017 - link

It's pretty useless measuring power alone. You need to measure efficiency (performance/watt).

So yeah, a 16 core CPU draws more power than a 10 core, but it's also probably doing a lot more work.

Diji1 - Thursday, August 10, 2017 - link

Er why don't you just do it yourself, they've already given you the numbers.