The AMD Ryzen Threadripper 1950X and 1920X Review: CPUs on Steroids

by Ian Cutress on August 10, 2017 9:00 AM EST

Grand Theft Auto

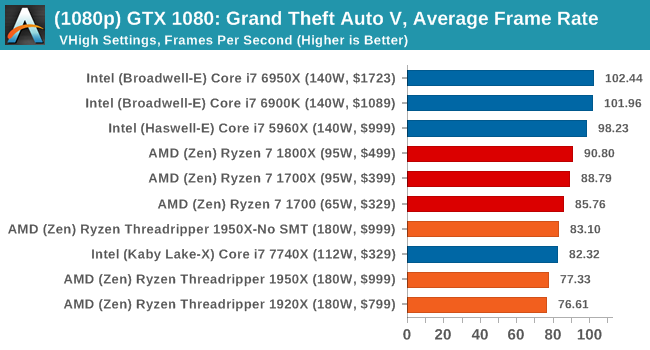

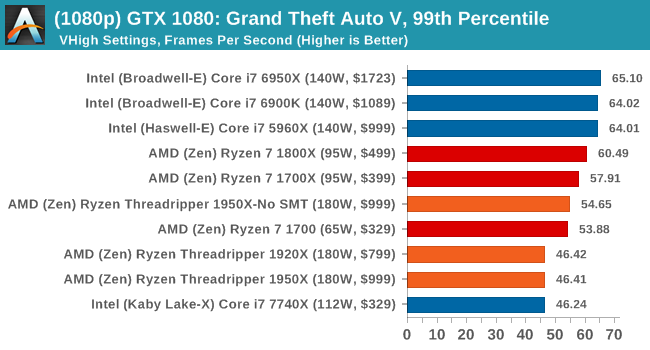

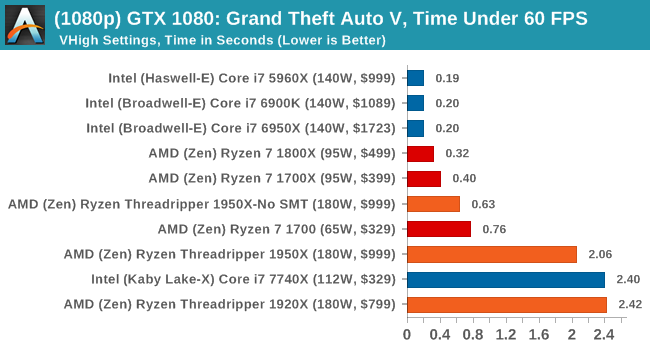

The highly anticipated iteration of the Grand Theft Auto franchise hit the shelves on April 14th, 2015, with both AMD and NVIDIA in tow to help optimize the title. GTA doesn’t provide graphical presets, but opens up the options to users and extends the boundaries by pushing even the most powerful systems to the limit using Rockstar’s Advanced Game Engine under DirectX 11. Whether the user is flying high in the mountains with long draw distances or dealing with assorted trash in the city, when cranked up to maximum it creates stunning visuals but hard work for both the CPU and the GPU.

For our test we have scripted a version of the in-game benchmark. The in-game benchmark consists of five scenarios: four short panning shots with varying lighting and weather effects, and a fifth action sequence that lasts around 90 seconds. We use only the final part of the benchmark, which combines a flight scene in a jet, an inner city drive-by through several intersections, and finally ramming a tanker that explodes, causing other cars to explode as well. This is a mix of distance rendering followed by a detailed near-rendering action sequence, and the title thankfully spits out frame time data.

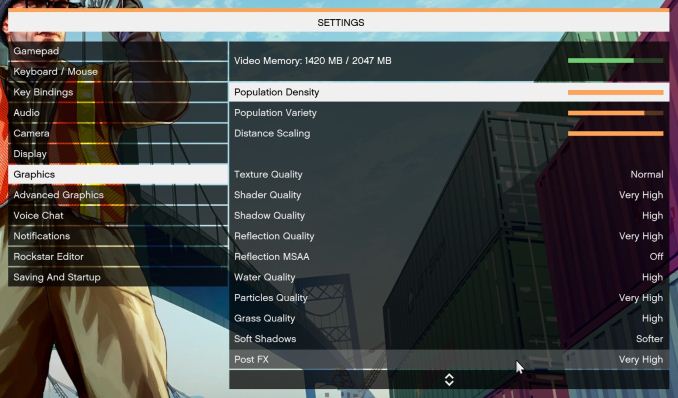

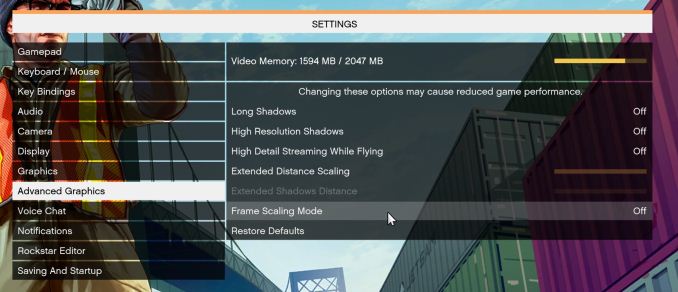

There are no presets for the graphics options on GTA. Instead, the user adjusts options such as population density and distance scaling on sliders, and others such as texture/shadow/shader/water quality from Low to Very High. Other options include MSAA, soft shadows, post effects, shadow resolution and extended draw distance options. There is a handy option at the top which shows how much video memory the options are expected to consume, with obvious repercussions if a user requests more video memory than is present on the card (although there’s no obvious indication if you have a low-end GPU with lots of GPU memory, like an R7 240 4GB).

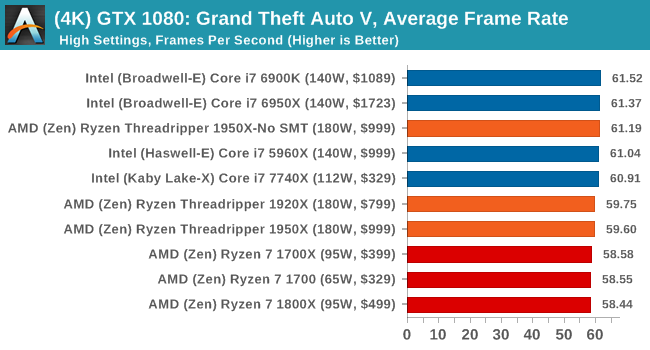

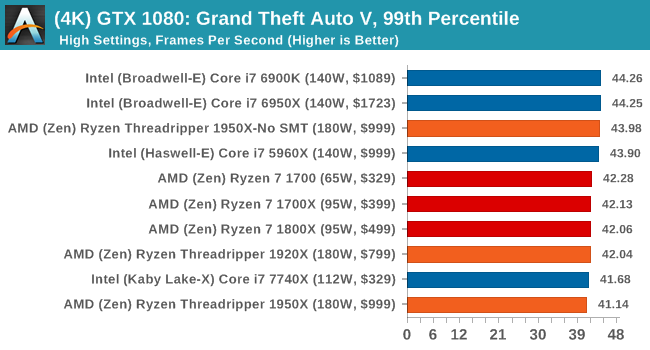

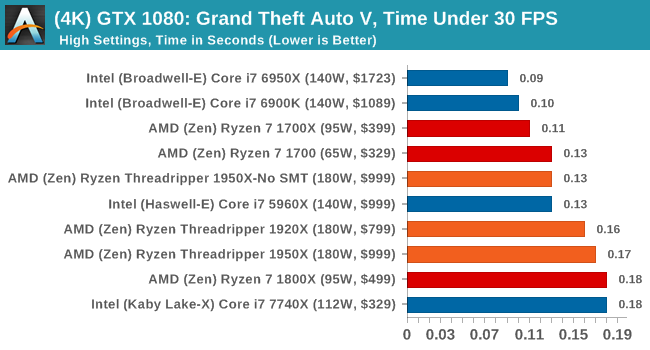

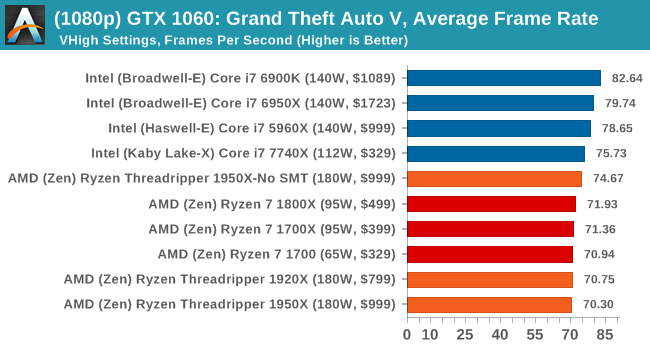

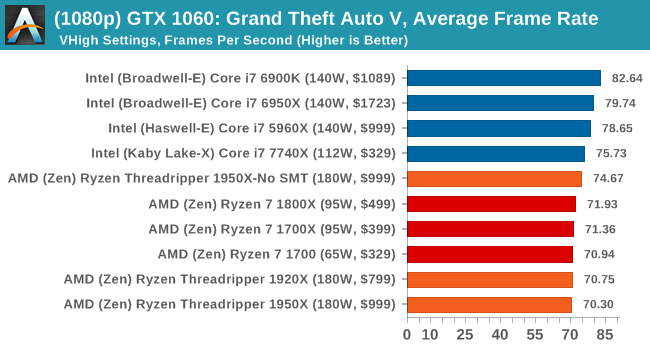

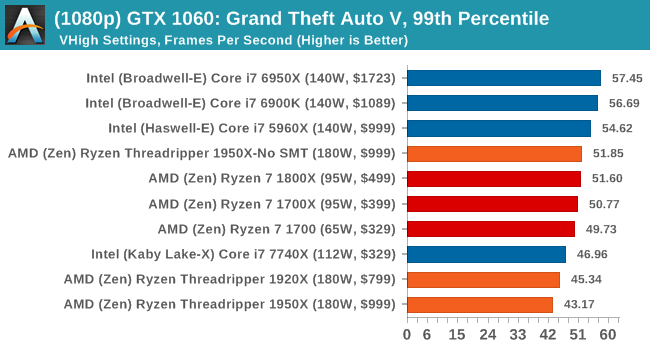

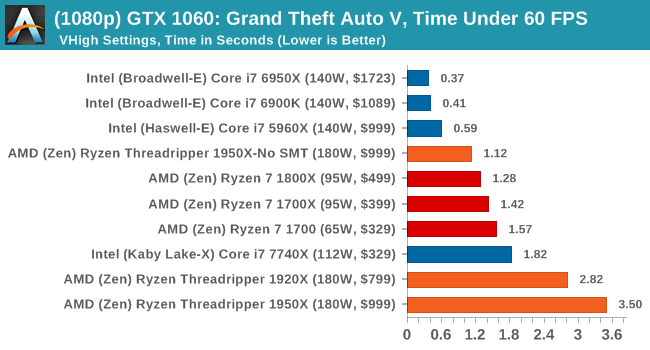

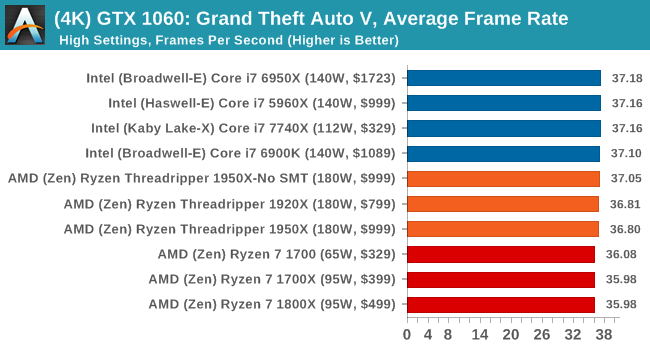

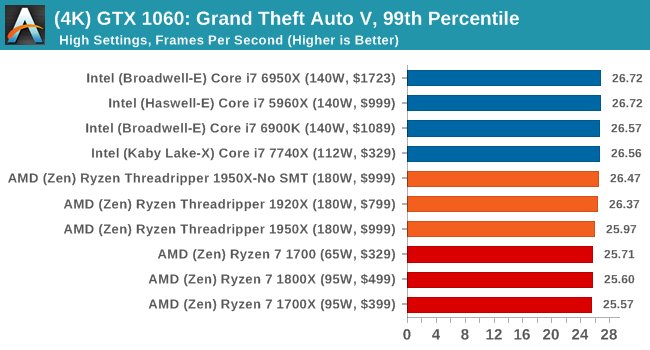

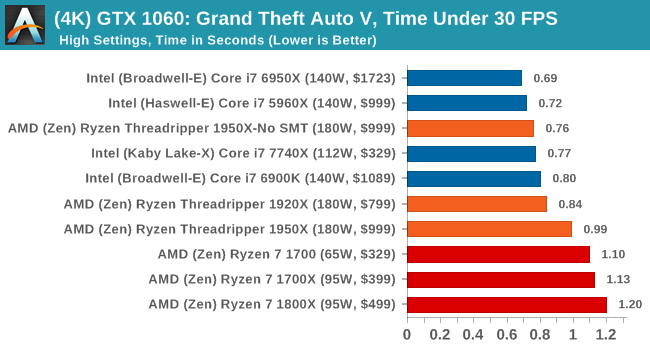

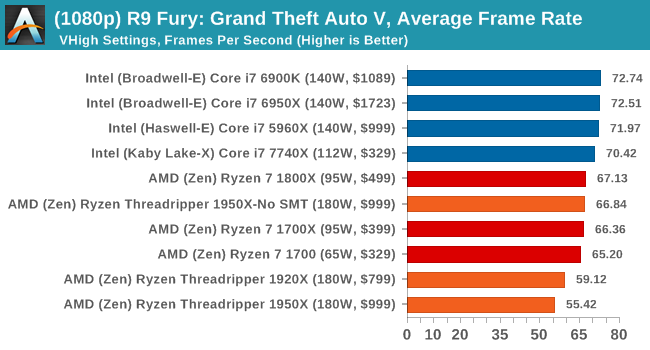

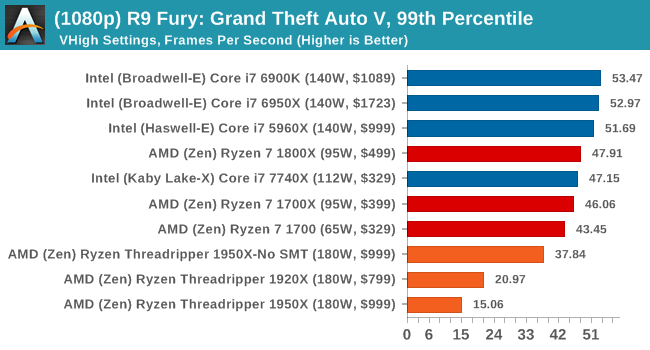

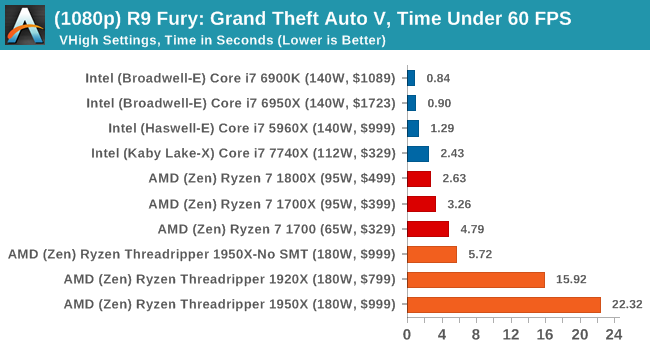

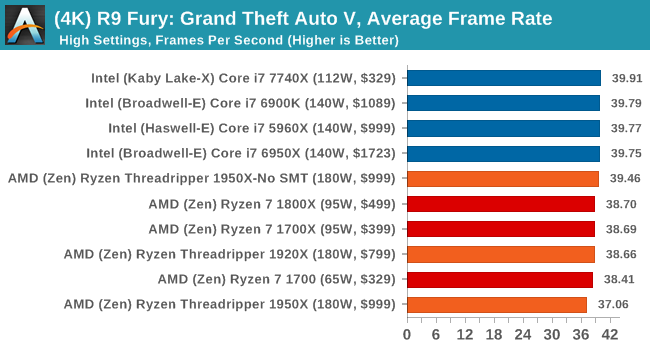

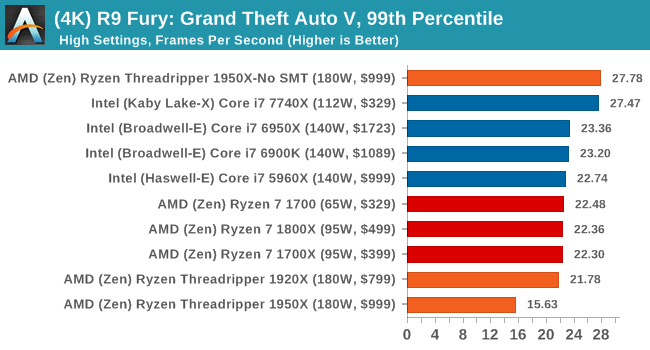

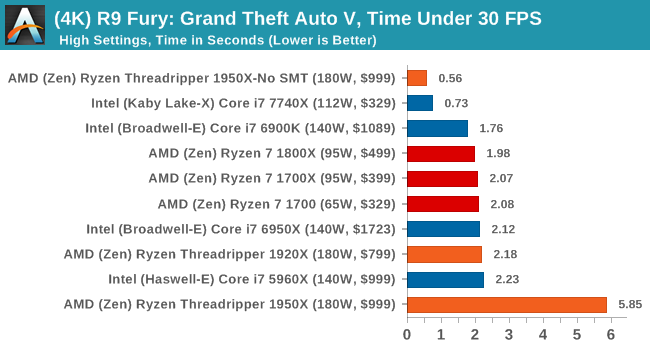

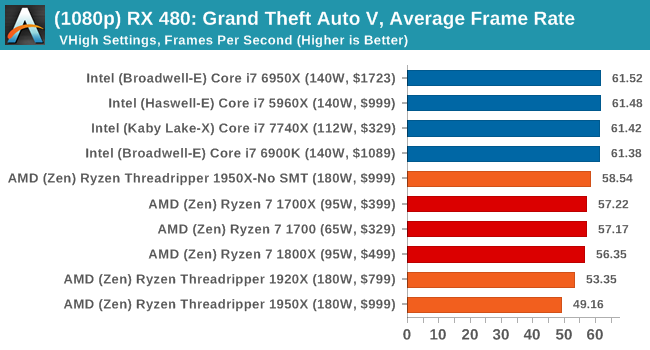

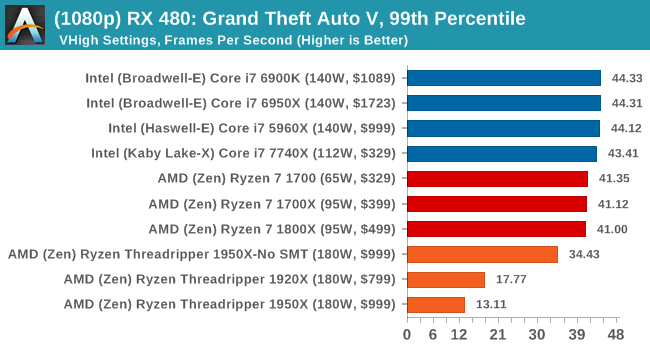

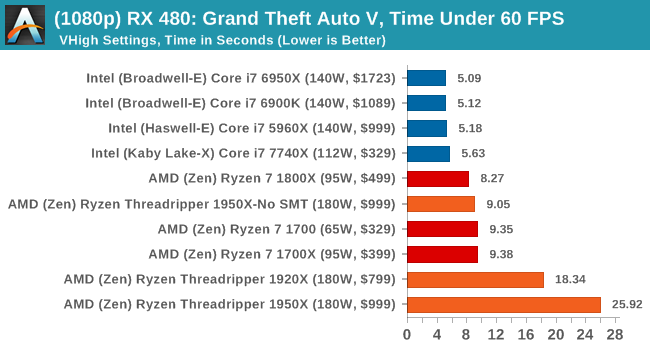

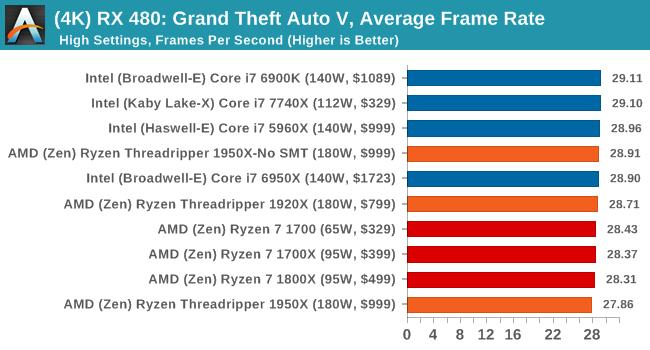

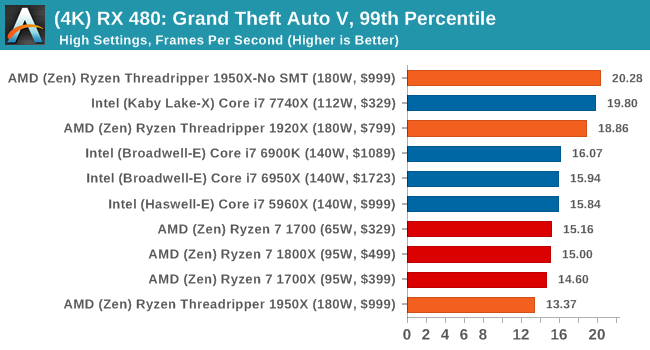

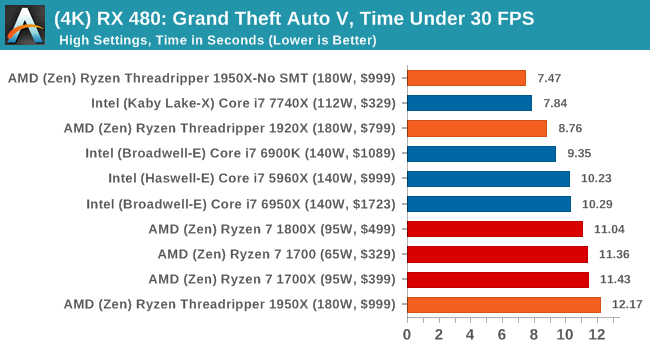

To that end, we run the benchmark at 1920x1080 with the settings at Very High on average, and also at 4K with most of them at High. We average the results of four runs, reporting average frame rates, 99th percentiles, and our "time under" analysis.
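As a rough illustration of how the reported numbers can be derived from frame time data, here is a minimal Python sketch. It is not the scripting used for this review: the function names, the millisecond input format, the nearest-rank percentile method, and the reading of "time under" as the share of elapsed time spent in frames slower than a threshold frame rate are all assumptions made for the example.

```python
from statistics import mean


def summarize_run(frame_times_ms, threshold_fps=60.0):
    """Summarize one benchmark run from per-frame times in milliseconds.

    Hypothetical helper: the input format and metric definitions are
    assumptions for illustration, not the review's actual tooling.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    n = len(frame_times_ms)
    total_ms = sum(frame_times_ms)

    # Average frame rate: frames rendered divided by elapsed seconds.
    avg_fps = 1000.0 * n / total_ms

    # 99th percentile frame time by the nearest-rank method, using
    # integer arithmetic for ceil(0.99 * n), then converted to a frame rate.
    ordered = sorted(frame_times_ms)
    rank = (99 * n + 99) // 100
    p99_fps = 1000.0 / ordered[rank - 1]

    # "Time under" (assumed definition): percentage of elapsed time spent
    # in frames that took longer than the threshold frame rate allows.
    limit_ms = 1000.0 / threshold_fps
    slow_ms = sum(t for t in frame_times_ms if t > limit_ms)
    time_under_pct = 100.0 * slow_ms / total_ms

    return {
        "avg_fps": avg_fps,
        "p99_fps": p99_fps,
        "time_under_pct": time_under_pct,
    }


def average_runs(runs, threshold_fps=60.0):
    """Average each metric across several runs (four in this test)."""
    summaries = [summarize_run(run, threshold_fps) for run in runs]
    return {key: mean(s[key] for s in summaries) for key in summaries[0]}
```

Passing four lists of frame times to `average_runs` gives one averaged figure per metric. The 99th percentile is taken on frame times rather than frame rates, so it reflects the slowest 1% of frames.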

All of our benchmark results can also be found in our benchmark engine, Bench.

MSI GTX 1080 Gaming 8G Performance

1080p

4K

ASUS GTX 1060 Strix 6G Performance

1080p

4K

Sapphire Nitro R9 Fury 4G Performance

1080p

4K

Sapphire Nitro RX 480 8G Performance

1080p

4K

For the most part, and depending on the CPU, Threadripper performs close to Ryzen or just below it.

347 Comments

bigboxes - Friday, August 11, 2017 - link

You're acting just like the fanboi trolls you claim to loathe.

Alexvrb - Sunday, August 13, 2017 - link

Yeah that was definitely a pot<->kettle comment. LOL.

trivor - Saturday, August 12, 2017 - link

For those of you considering this CPU the fact is you are going to get MUCH better value by choosing one of the Ryzen CPUs - Ryzen 7 1800X is now at around $420 for 8/16 and the 7 1700 (8/16 again) has been on sale for as little as $299. Now, if you need the high thread counts for work on things like content creation and you still want to be able to run games it will be competitive (read: not the king of the hill) when you are running your games. So, if more than 50% of your computing time is gaming then go for an Intel CPU OR one of the Ryzen 5/7 consumer CPUs.

Lord of the Bored - Friday, August 11, 2017 - link

Which would explain why the introduction doesn't mention the Netburst fiasco by name.

"The company that could force the most cycles through a processor could get a base performance advantage over the other, and it led to some rather hot chips, with the certain architectures being dropped for something that scaled better." is, to my eye, actually attention-grabbing in the way it avoids using any names like Preshott, I mean Prescott and only obliquely references the 1GHz Athlon, the Thunderbirds, Sledgehammer, and the whole Netburst fiasco that destroyed the once-respected Pentium name.

But no, let's just say that "certain architectures" were dropped and there were "some rather hot chips" and keep Intel happy. They need that bone right now, though not as much as they did during the reign of Thunderbird and the 'hammers.

Hurr Durr - Friday, August 11, 2017 - link

If the unword "NetBurst" triggers you so much, it's not processors you should spend money on, but shrinks.

Lord of the Bored - Friday, August 11, 2017 - link

Hey, we were an Athlon house. I didn't suffer through the series of mis-steps that plagued Intel. I just thought the sentence was conspicuous in how hard it tried to not name names.

mlambert890 - Saturday, August 12, 2017 - link

"name names"? There are 2 companies that make CPUs. Everyone knows Netburst was Intel P4 era. It's not Watergate ok?

Conspiracy obsession has become a legitimate mental illness.

fallaha56 - Thursday, August 10, 2017 - link

handy not to show the new Intel chip struggle eh?

Breit - Friday, August 11, 2017 - link

Is it possible to bench the Intel CPUs (especially the i9-7900x) for those latency/single-thread tests with Hyperthreading turned off? This would probably give a better comparison to AMD's Game Mode and hopefully higher numbers too due to double the cache/registers available to one thread.

cheshirster - Friday, August 11, 2017 - link

Skylake-X sucks at gaming.

7800X is slower than 1600X.