The Intel Skylake-X Review: Core i9 7900X, i7 7820X and i7 7800X Tested

by Ian Cutress on June 19, 2017 9:01 AM EST

I Keep My Cache Private

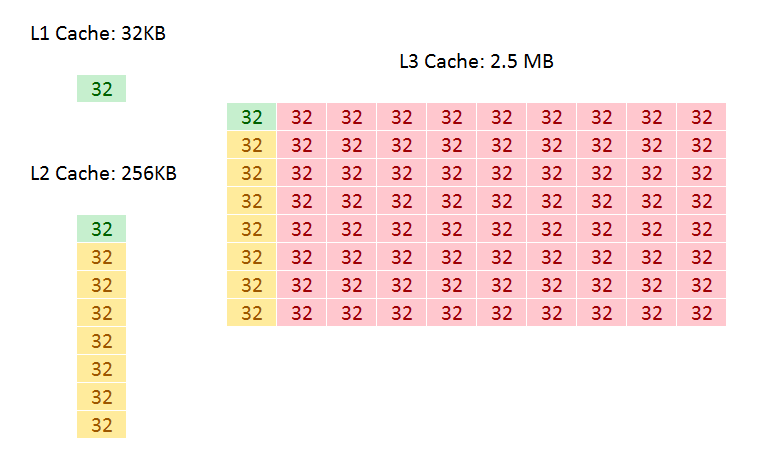

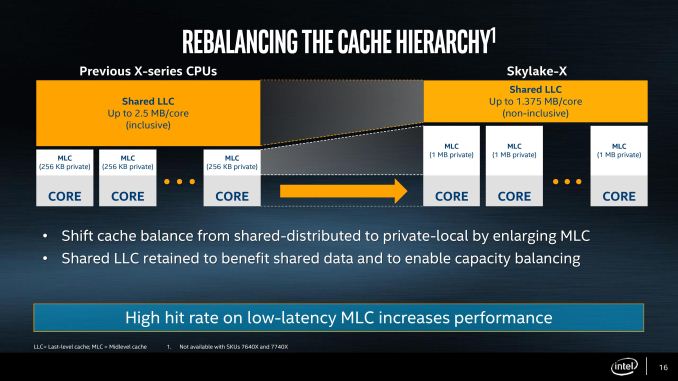

As mentioned in the original Skylake-X announcements, the new Skylake-SP cores have shaken up the cache hierarchy compared to previous generations. What used to be a simple inclusive cache hierarchy has now been adjusted in size, policy, latency, and efficiency, which will have a direct impact on performance. It also means that Skylake-S and Skylake-SP will have different instruction throughput efficiency levels. The result could be the difference between chalk and cheese, or merely the difference between stilton and aged stilton.

Let us start with a direct comparison of Skylake-S and Skylake-SP.

Comparison: Skylake-S and Skylake-SP Caches

| Skylake-S | Features | Skylake-SP |
|---|---|---|
| 32 KB, 8-way, 4-cycle; 4KB 64-entry 4-way TLB | L1-D | 32 KB, 8-way, 4-cycle; 4KB 64-entry 4-way TLB |
| 32 KB, 8-way; 4KB 128-entry 8-way TLB | L1-I | 32 KB, 8-way; 4KB 128-entry 8-way TLB |
| 256 KB, 4-way, 11-cycle; 4KB 1536-entry 12-way TLB; Inclusive | L2 | 1 MB, 16-way, 11-13 cycle; 4KB 1536-entry 12-way TLB; Inclusive |
| < 2 MB/core, up to 16-way, 44-cycle; Inclusive | L3 | 1.375 MB/core, 11-way, 77-cycle; Non-inclusive |

The new core keeps the same L1D and L1I cache structures: each is a 32KB 8-way writeback cache. The L1D has a 4-cycle access latency on both cores, but the two differ in load/store bandwidth: Skylake-S does 2x32-byte loads and 1x32-byte store per cycle, whereas Skylake-SP offers double on both.
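As a quick arithmetic check on those per-cycle figures, the sketch below converts load and store widths into peak bytes per cycle. It reads "double on both" as 2x64-byte loads and 1x64-byte store, which is an assumption about how the doubling is delivered; the function name is purely illustrative.

```python
def l1_bytes_per_cycle(loads, load_bytes, stores, store_bytes):
    """Return (load, store) bytes moved per cycle at peak."""
    return loads * load_bytes, stores * store_bytes

# Figures from the text: 2x32B loads + 1x32B store per cycle.
skylake_s = l1_bytes_per_cycle(2, 32, 1, 32)

# Assumption: the doubling comes from wider (64B) accesses.
skylake_sp = l1_bytes_per_cycle(2, 64, 1, 64)

print("Skylake-S  load/store bytes per cycle:", skylake_s)
print("Skylake-SP load/store bytes per cycle:", skylake_sp)
```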

The big changes are with the L2 and the L3. Skylake-SP has a 1MB private L2 cache with 16-way associativity, compared to the 256KB private L2 cache with 4-way associativity in Skylake-S. The L3 changes to an 11-way non-inclusive 1.375MB/core, from a 20-way fully-inclusive 2.5MB/core arrangement.

That’s a lot to unpack, so let’s start with inclusivity:

An inclusive cache contains everything in the cache underneath it and has to be at least the same size as the cache underneath (and usually a lot bigger), compared to an exclusive cache which has none of the data in the cache underneath it. The benefit of an inclusive cache is that if a line in the lower cache is evicted because it is old and room is needed for other data, there should still be a copy in the cache above it which can be called upon. The downside is that the cache above it has to be huge: with Skylake-S we have a 256KB L2 and a 2.5MB/core L3, meaning that the L2 data could be replaced 10 times before a line is evicted from the L3.
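To put a number on that size requirement, the sketch below computes how much of an inclusive L3 slice is taken up by lines that are already in the L2. The first case uses the 256KB and 2.5MB/core figures quoted above. The second case is a hypothetical what-if (a 1MB L2 under an inclusive 1.375MB/core L3), not a configuration Intel shipped, and the helper name is illustrative.

```python
KB = 1024
MB = 1024 * KB

def inclusive_overhead(l2_bytes, l3_bytes_per_core):
    """Fraction of an inclusive L3 slice that merely duplicates the L2."""
    return l2_bytes / l3_bytes_per_core

# Figures quoted in the text: roughly a tenth of the L3 mirrors the L2.
old = inclusive_overhead(256 * KB, 2.5 * MB)

# Hypothetical: keep the L3 inclusive with the new L2 and L3 sizes.
hypothetical = inclusive_overhead(1 * MB, 1.375 * MB)

print(f"256KB L2 under 2.5MB L3:  {old:.0%} duplicated")
print(f"1MB L2 under 1.375MB L3:  {hypothetical:.0%} duplicated")
```

Under these assumptions, most of an inclusive 1.375MB slice would hold duplicates of L2 data, which is one way to see why the larger L2 and an inclusive L3 do not sit well together.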

A non-inclusive cache is somewhat between the two, and is different to an exclusive cache: in this context, when a data line is present in the L2, it does not immediately go into L3. If the value in L2 is modified or evicted, the data then moves into L3, storing an older copy. (The reason it is not called an exclusive cache is that the data can be re-read from L3 to L2 and still remain in the L3.) This is what we usually call a victim cache, depending on whether the core can prefetch data into L2 only or into L2 and L3 as required. In this case, we believe the SKL-SP core cannot prefetch into L3, making the L3 a victim cache similar to what we see on Zen, or Intel’s first eDRAM parts on Broadwell. Victim caches usually have limited roles, especially when they are similar in size to the cache below them (if a line is evicted from a large L2, what are the chances you’ll need it again so soon?), but some workloads that require a large reuse of recent data that spills out of L2 will see some benefit.
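The behaviour described above can be sketched as a toy model. This is a deliberately simplified illustration, not a description of Intel's actual implementation: both levels are fully associative with LRU replacement, capacities are counted in lines, and the `LRU` class and `access` function are names invented for this sketch. Fills go to the L2 only, L2 evictions populate the L3, and an L3 hit leaves the L3 copy in place.

```python
from collections import OrderedDict

class LRU:
    """Tiny fully-associative LRU cache holding line addresses."""
    def __init__(self, capacity):
        self.capacity = capacity
        self.lines = OrderedDict()

    def touch(self, addr):
        """Mark addr most-recently used if present; return hit/miss."""
        if addr in self.lines:
            self.lines.move_to_end(addr)
            return True
        return False

    def insert(self, addr):
        """Insert addr; return the evicted address, or None."""
        self.lines[addr] = True
        self.lines.move_to_end(addr)
        if len(self.lines) > self.capacity:
            victim, _ = self.lines.popitem(last=False)
            return victim
        return None

def access(l2, l3, addr):
    """Return where the line was found: 'L2', 'L3' or 'MEM'."""
    if l2.touch(addr):
        return "L2"
    where = "L3" if l3.touch(addr) else "MEM"
    # The fill goes to L2 only; an existing L3 copy stays where it is.
    victim = l2.insert(addr)
    if victim is not None:
        l3.insert(victim)  # L2 evictions are what populate the L3
    return where

l2, l3 = LRU(2), LRU(4)
trace = [access(l2, l3, a) for a in ["A", "B", "C", "A", "A"]]
print(trace)
```

In this trace, line A is pushed out of the two-line L2 by C, lands in the L3, is found there on the next access, and afterwards lives in both levels at once, which is the property that separates non-inclusive from strictly exclusive.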

So why move to a victim cache on the L3? Intel’s goal here was the larger private L2. Moving from 256KB to 1MB is a double double increase. A general rule of thumb is that doubling a cache’s size cuts the miss rate by a factor of the square root of 2 (roughly 29% fewer misses), which can be the equivalent of a 3-5% IPC uplift. By doing a double double (as well as doing the double double on the associativity), Intel is effectively halving the L2 miss rate with the same prefetch rules. Normally this benefits any L2 size sensitive workloads, and some enterprise workloads such as databases fall into that category (we fully suspect that a larger L2 came at the request of the cloud providers).
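The rule of thumb can be written down directly. The sketch below takes the square-root rule to mean that miss rate scales with one over the square root of capacity, which is the reading under which a 4x larger cache halves the miss rate. The 10% starting miss rate is a made-up placeholder, not a measured figure.

```python
import math

def scaled_miss_rate(base_miss_rate, size_ratio):
    """Square-root rule of thumb: miss rate scales with 1/sqrt(size)."""
    return base_miss_rate / math.sqrt(size_ratio)

base = 0.10  # hypothetical L2 miss rate at 256KB

doubled = scaled_miss_rate(base, 2)     # 512KB
quadrupled = scaled_miss_rate(base, 4)  # 1MB, the "double double"

print(f"256KB: {base:.3f}  512KB: {doubled:.3f}  1MB: {quadrupled:.3f}")
```

Real workloads will deviate from this curve: anything whose working set already fits in 256KB gains nothing, while one that newly fits in 1MB can gain far more than the rule suggests.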

Moving to a larger cache typically increases latency. Intel is stating that the L2 latency has increased, from 11 cycles to ~13, depending on the type of access; the fastest load-to-use is expected to be 13 cycles. Adjusting the latency of the L2 cache is going to have a knock-on effect, given that code that is not L2 size sensitive might still be affected.

So if the L2 is larger and has a higher latency, does that mean the smaller L3 is lower latency? Unfortunately not, given the size of the L2 and a number of other factors. With the L3 being a victim cache, it is typically used less frequently, so Intel can give the L3 less stringent requirements to remain stable. In this case the latency has increased from 44 cycles in SKL-S to 77 cycles in SKL-SP. That’s a sizeable difference, but again, given the utility of the victim cache it might make little difference to most software.
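One way to reason about whether the slower L3 matters is the standard average memory access time (AMAT) calculation. The sketch below plugs in the latencies from the table above, but every miss rate and the 200-cycle memory latency are invented placeholders chosen only to show the shape of the trade-off; they are not measurements of either core.

```python
def amat(l1_lat, l2_lat, l3_lat, mem_lat, l1_miss, l2_miss, l3_miss):
    """Average memory access time in cycles for a three-level hierarchy.

    Each *_miss argument is the local miss rate of that level.
    """
    return l1_lat + l1_miss * (l2_lat + l2_miss * (l3_lat + l3_miss * mem_lat))

# Latencies from the comparison table; miss rates are hypothetical.
skylake_s = amat(4, 11, 44, 200, l1_miss=0.05, l2_miss=0.40, l3_miss=0.30)

# Assumptions: L2 miss rate halved by the 4x larger L2, and a worse
# L3 miss rate because the victim L3 is smaller.
skylake_sp = amat(4, 13, 77, 200, l1_miss=0.05, l2_miss=0.20, l3_miss=0.50)

print(f"Skylake-S  AMAT: {skylake_s:.2f} cycles")
print(f"Skylake-SP AMAT: {skylake_sp:.2f} cycles")
```

With these particular placeholder inputs the two hierarchies land close together, because fewer accesses reach the L3 at all. Different miss rates can tip the result either way, which is consistent with the expectation that the effect depends heavily on the workload.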

Moving the L3 to a non-inclusive cache will also have repercussions for some of Intel’s enterprise features. Back at the Broadwell-EP Xeon launch, one of the features provided was L3 cache partitioning, allowing a limited-size virtual machine to hog most of the L3 cache if it was running a mission-critical workflow. Because the L3 cache was more important then, this was a good feature to add. Intel won’t say how this feature has evolved with the Skylake-SP core at this time, so we will probably have to wait until that launch to find out.

As a side note, it is worth noting here that Broadwell-E had a 256KB private L2 that was 8-way, compared to Skylake-S which had a 256KB private L2 that was 4-way. Intel stated that the Skylake-S base core went down in associativity for several reasons, but the main one was to make the design more modular. In this case it means the Skylake-SP L2 is 4x that of Skylake-S in both size and associativity by design, and it suggests that there may be 512KB 8-way variants in the future.
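The modularity argument is easier to see in terms of cache sets. The sketch below computes the number of sets for each L2 configuration mentioned above, assuming 64-byte cache lines (an assumption not stated in the text). The 512KB 8-way entry is the speculative variant floated above, not an announced product.

```python
LINE_BYTES = 64  # assumed cache line size

def num_sets(size_kb, ways, line_bytes=LINE_BYTES):
    """Number of sets = capacity / (ways * line size)."""
    return size_kb * 1024 // (ways * line_bytes)

configs = {
    "Broadwell-E 256KB 8-way": num_sets(256, 8),
    "Skylake-S 256KB 4-way": num_sets(256, 4),
    "Skylake-SP 1MB 16-way": num_sets(1024, 16),
    "Hypothetical 512KB 8-way": num_sets(512, 8),
}

for name, sets in configs.items():
    print(f"{name}: {sets} sets")
```

Under that assumption, the Skylake-S, Skylake-SP and hypothetical 512KB designs all share the same set count, so the cache can grow by adding ways without changing how addresses are indexed, whereas the 8-way Broadwell-E L2 has half as many sets.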

264 Comments


Soheil - Sunday, June 25, 2017 - link

Anyone knows why 1600X better than 1800X?

OddFriendship8989 - Thursday, June 29, 2017 - link

I'm late here as usual but why are you not comparing against the 7700k and 7600k? I get that these are HEDT chips, but it's worth comparing against the high end mainstream especially when the 7800x and 7700k are priced similarly that someone MIGHT consider jumping over.

I hate to say it but this is the typical stuff you guys used to do, and I know it takes more time to put together more CPUs, but logical comparisons MUST be made and these charts show a bit of laziness.

ashlol - Friday, June 30, 2017 - link

can we have the GPU tests please

Oxford Guy - Saturday, July 1, 2017 - link

"The discussion on whether Intel should be offering a standard goopy TIM or the indium-tin solder that they used to (and AMD uses) is one I’ve run on AnandTech before, but there’s a really good guide from Roman Hartung, who overclocks by the name der8auer. I’m trying to get him to agree to post it on AnandTech with SKL-X updates so we can discuss it here, but it really is some nice research. You can find the guide over at http://overclocking.guide."

If you have a point to make then make it. After all, you said you've already "run" this discussion before. Tell us why polymer TIM is a better choice than solder (preferably without citing cracks from liquid nitrogen cooling).

ashlol - Monday, July 3, 2017 - link

Anyway both are bad since you have to delid it to achieve good cooling. I have delidded a 4770k and a 6700k and put liquid metal TIM between the die and the IHS and they both run 15°C cooler at 4.6-4.7GHz@60°C with custom loop. And from seeing the temperature under overclock I will have to delid those skylake-x too.

parlinone - Tuesday, July 4, 2017 - link

What I find most shocking is a $329 Ryzen 1700 outperforms a $389 7800X at Cinebench...for less than half the power.

The performance to power ratio translates to 239% in AMD's advantage. That's unprecedented, and I never imagined to see that day.

dwade123 - Thursday, July 6, 2017 - link

Only in Cinebench and AES is where Ryzen look good. 7800x beats the 1800x in everything else in this review. Ryzen is too inconsistent in both productivity and gaming. It is priced accordingly to that and not out of good faith from AMD. This is also the reason why Coffee Lake will only top out at 6 cores, because it can consistently beat the best Ryzen model.

IGTrading - Friday, July 14, 2017 - link

I absolutely disagree with the conclusion. The correct conclusion can only be drawn when comparing apples to apples. Oh, if you want to be objective and compare apples to oranges, you can't just take into consideration today's benchmark results and price. Have we forgotten about the days we REVIEWERS were complaining about the high power consumption of Pentium 4 and Pentium D?! What about the FX 8350?! Is power consumption not an objective metric anymore?! What about platform price?! What about price/performance?! Why do some people get suddenly blinded by marketing money?! Conclusion: i-7900X is the highest performance the home power user can get today if money for the CPU, mobo and subsequent power consumption are not an issue. Comparing apples to apples or core for core, the i7820X clearly shows Intel's anxiety with Zen. The i7820X consumes 40% more than the AMD 1800X and costs 20% more while its motherboard is 200% the price. So paying all these heaps of money, CORE for CORE the Intel 7820X is a bit faster in some benchmarks, as it should be considering the power consumption and price you pay, EQUAL in a few benchmarks and SLOWER in a few other benchmarks. Would you pay the serious extra money for this?! And put up with the 40% higher power consumption and heat generation?! Come ooooon ...

azulon1 - Sunday, July 16, 2017 - link

Wow how exactly is this fair that Intel gets a pass for gaming, because there were problems with the platform. If I remember Ryzen also had a problem with gaming. But it didn’t stop you guys then did it. Don’t group me into AMD fanboy, but why such a bias?

Soheil - Saturday, July 29, 2017 - link

no one answer to me? why 1600X better than 1700 and 1700X and some time better than 1800X? what about 1600? is good like as 1600X for gaming or not?