Apple To Develop Own GPU, Drop Imagination's GPUs From SoCs

by Ryan Smith on April 3, 2017 6:30 AM EST

We typically don’t write about what hardware vendors aren’t going to be doing, but then most things hardware vendors don’t do are internal and never make it to the public eye. However when those things do make it to the public eye, then they are often a big deal, and today’s press release from Imagination is especially so.

In a bombshell of a press release issued this morning, Imagination has announced that Apple has informed their long-time GPU partner that they will be winding down their use of Imagination’s IP. Specifically, Apple expects that they will no longer be using Imagination’s IP for new products in 15 to 24 months. Furthermore the GPU design that replaces Imagination’s designs will be, according to Imagination, “a separate, independent graphics design.” In other words, Apple is developing their own GPU, and when that is ready, they will be dropping Imagination’s GPU designs entirely.

This alone would be big news, however the story doesn’t stop there. As Apple’s long-time GPU partner and the provider for the basis of all of Apple’s SoCs going back to the very first iPhone, Imagination is also making a case to investors (and the public) that while Apple may be dropping Imagination’s GPU designs for a custom design, Apple can’t develop a new GPU in isolation – that any GPU developed by the company would still infringe on some of Imagination’s IP. As a result the company is continuing to sit down with Apple and discuss alternative licensing arrangements, with the intent of defending their IP rights. Put another way, while any Apple-developed GPU will contain a whole lot less of Imagination’s IP than the current designs, Imagination believes that it will still have elements based on Imagination’s IP, and as a result Apple would need to make lesser royalty payments to Imagination for devices using the new GPU.

An Apple-Developed GPU?

From a consumer/enthusiast perspective, the big change here is of course that Apple is going their own way in developing GPUs. It’s no secret that the company has been stocking up on GPU engineers, and from a cost perspective money may as well be no object for the most valuable company in the world. However this is the first confirmation that Apple has been putting their significant resources towards the development of a new GPU. Previous to this, what little we knew of Apple’s development process was that they were taking a sort of hybrid approach in GPU development, designing GPUs based on Imagination’s core architecture, but increasingly divergent/customized from Imagination’s own designs. The resulting GPUs weren’t just stock Imagination designs – and this is why we’ve stopped naming them as such – but to the best of our knowledge, they also weren’t new designs built from the ground up.

What’s interesting about this, besides confirming something I’ve long suspected (what else are you going to do with that many GPU engineers?), is that Apple’s trajectory on the GPU side very closely follows their trajectory on the CPU side. In the case of Apple’s CPUs, they first used more-or-less stock ARM CPU cores, started tweaking the layout with the A-series SoCs, began developing their own CPU core with Swift (A6), and then dropped the hammer with Cyclone (A7). On the GPU side the path is much the same; after tweaking Imagination’s designs, Apple is now at the Swift portion of the program, developing their own GPU.

What this amounts to for Apple and their products could be immense, or it could be little more than a footnote in the history of Apple’s SoC designs. Will Apple develop a conventional GPU design? Will they try for something more radical? Will they build bigger discrete GPUs for their Mac products? On all of this, only time will tell.

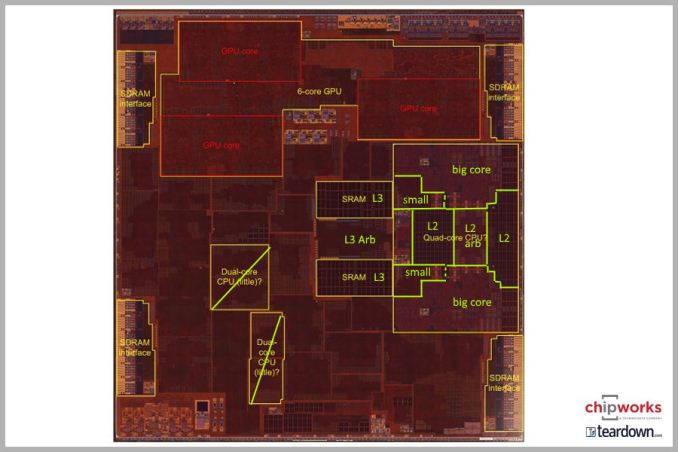

Apple A10 SoC Die Shot (Courtesy TechInsights)

However, and these are words I may end up eating in 2018/2019, I would be very surprised if an Apple-developed GPU has the same market-shattering impact that their Cyclone CPU did. In the GPU space some designs are stronger than others, but A) there is no “common” GPU design like there was with ARM Cortex CPUs, and B) there isn’t an immediate and obvious problem with current GPUs that needs to be solved. What spurred the development of Cyclone and other Apple high-performance CPUs was that no one was making what Apple really wanted: an Intel Core-like CPU design for SoCs. Apple needed something bigger and more powerful than anyone else could offer, and they wanted to go in a direction that ARM was not by pursuing deep out-of-order execution and a wide issue width.

On the GPU side, however, GPUs are far more scalable. If Apple needs a more powerful GPU, Imagination’s IP can scale from a single cluster up to 16, and the forthcoming Furian can go even higher. And to be clear, unlike CPUs, adding more cores/clusters does help across the board, which is why NVIDIA is able to put the Pascal architecture in everything from a 250-watt card to an SoC. So whatever is driving Apple’s decision, it’s not just about raw performance.

What is still left on the table is efficiency – both area and power – and cost. Apple may be going this route because they believe they can develop a more efficient GPU internally than they can following Imagination’s GPU architectures, which would be interesting to see as, to date, Imagination’s Rogue designs have done very well inside of Apple’s SoCs. Alternatively, Apple may just be tired of paying Imagination $75M+ a year in royalties, and wants to bring that spending in-house. But no matter what, all eyes will be on how Apple promotes their GPUs and their performance later this year.

Speaking of which, the timetable Imagination offers is quite interesting. According to Imagination’s press release, Apple has told the company that it will no longer be using Imagination’s IP for new products in 15 to 24 months. As Imagination is an IP company, this is a critical distinction: this doesn’t mean that Apple is going to launch their new GPU in 15 to 24 months, it’s that they’re going to be done rolling out new products using Imagination’s IP altogether within the next 2 years.
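To put calendar dates on that window, here is a rough sketch of the arithmetic. It assumes the clock starts on the day of Imagination’s press release (April 3, 2017), which is only an assumption: the release doesn’t say when Apple actually gave notice, so the real window could sit somewhat earlier. The helper name `add_months` is purely illustrative.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Shift a date forward by whole months, clamping the day to the month's length."""
    total = d.year * 12 + (d.month - 1) + months
    year, month = total // 12, total % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


# Assumption: the 15-to-24-month clock starts at the press release date.
ANNOUNCED = date(2017, 4, 3)

window_start = add_months(ANNOUNCED, 15)
window_end = add_months(ANNOUNCED, 24)

print(f"Earliest end of new Imagination-based products: {window_start:%B %Y}")
print(f"Latest end of new Imagination-based products:   {window_end:%B %Y}")
```

Under that assumption the window runs from roughly July 2018 to April 2019.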

Apple SoC History

| SoC | First Product | Discontinued |
|---|---|---|
| A7 | iPhone 5s (2013) | iPad Mini 2 (2017) |
| A8 | iPhone 6 (2014) | Still In Use: iPad Mini 4, iPod Touch |
| A9 | iPhone 6s (2015) | Still In Use: iPad, iPhone SE |
| A10 | iPhone 7 (2016) | Still In Use |

And that, in turn, means that Apple’s new GPU could be launching sooner rather than later. I hesitate to read too much into this because there are so many other variables at play, but the obvious question is what this means for the (presumed) A11 SoC in this fall’s iPhone. Apple has tended to sell most of their SoCs for a few years – trickling down from iPhone and high-end iPad to their entry-level equivalents – so it could be that Apple needs to launch their new GPU in A11 in order to have it trickle down to lower-end products inside that 15 to 24 month window. On the other hand, Apple could go with Imagination in A11, and then just avoid doing trickle-down, using new SoC designs for entry-level devices instead. The only thing that’s safe to say right now is that with this revelation, an Imagination GPU design is no longer a lock on A11 – anything is going to be possible.

But no matter what, this does make it very clear that Apple has passed on Imagination’s next-generation Furian GPU architecture. Furian won’t be ready in time for A11, and anything after that is guaranteed to be part of Apple’s GPU transition. So Rogue will be the final Imagination GPU architecture that Apple uses.

144 Comments


renz496 - Monday, April 3, 2017 - link

that's only for old patent right? but if Apple need to make competitive modern GPU and supporting all the latest feature that still going to touch much more recent Imagination IP.

trane - Monday, April 3, 2017 - link

> Alternatively, Apple may just be tired of paying Imagination $75M+ a year

Yeah, that's all there is to it.

Even with CPUs, they could easily have paid companies like Qualcomm or Nvidia to develop a custom wide CPU for them. Heck, isn't that what Denver is anyway? The first Denver was comfortably beating Apple A8 at the time. Too bad there's no demand for Tegras anymore, Denver v2 might have been good competition for A10. Maybe someone could benchmark a car using it...

TheinsanegamerN - Monday, April 3, 2017 - link

Denver could only beat the A8 in software coded for that kind of CPU (vilv). Any kind of spaghetti code left denver choking on it's own spit, and it was more power hungry to boot.

A good first attempt, but nvidia seems to have abandoned it. The fact that nvidia didnt use denver in their own tablet communicated that it was a failure in nvidia's eyes.

tipoo - Monday, April 3, 2017 - link

Parker will use Denver 2 I believe, but paired with stock big ARM cores as well, probably to cover for its weaknesses.

fanofanand - Monday, April 3, 2017 - link

That's what I read as well, they will go 2 + 4 with 2 Denver cores and 4 ARM cores (probably A73), letting the ARM cores handle the spaghetti code and the Denver handling the vilv code.

tipoo - Monday, April 3, 2017 - link

> The first Denver was comfortably beating Apple A8 at the time

Eh, partial truth at best there. Denvers binary translation architecture worked well for straight, predictable code, but as soon as you started getting unpredictable it would choke up. So it suffered a fair bit on simple user facing multitasking for example, or any benchmark with an element of randomness.

Denver 2 with a doubled far cache could have been interesting, I guess we'll see, but Denver didn't exactly light the world on fire.

dud3r1no - Monday, April 3, 2017 - link

I'd be curious if this is a sort of power play.

This announcement has tanked the Imagination stock (down like 60% this morning). Acquire IMG cheap. Get all that IP and block other corps from access at the same time?

Ultraman1966 - Monday, April 3, 2017 - link

Anti trust laws says they can't do that.

melgross - Monday, April 3, 2017 - link

What anti trust laws? Is Apple the biggest GPU manufacturer around?

Eden-K121D - Monday, April 3, 2017 - link

Market Manipulation. I think SEC and FSA won't be pleased