AMD Zen Microarchitecture: Dual Schedulers, Micro-Op Cache and Memory Hierarchy Revealed

by Ian Cutress on August 18, 2016 9:00 AM EST

Low Power, FinFET and Clock Gating

When AMD launched Carrizo and Bristol Ridge for notebooks, one of the big stories was how AMD had implemented a number of techniques to reduce power consumption and thereby increase efficiency. A number of those lessons have carried over to Zen, along with a few new aspects that come into play because of the new lithography.

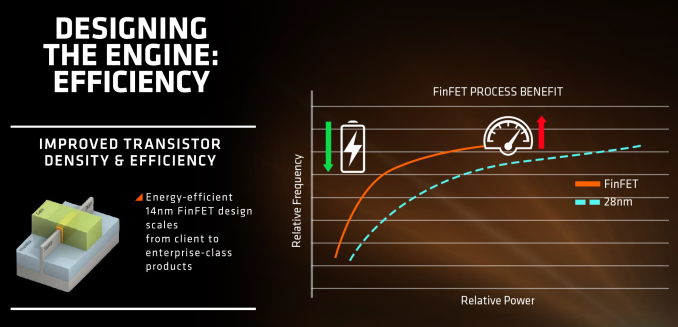

First up is the FinFET effect. Regular readers of AnandTech and those who follow the industry will already be bored to death with FinFET, but the design allows a transistor to operate at lower power for a given frequency. Of course, every manufacturer using FinFET can have a different implementation with its own power/performance characteristics, but the 14nm FinFET process at GlobalFoundries that Zen uses is already a known quantity, since AMD's Polaris GPUs are built similarly. FinFET, combined with AMD's confirmation that it will use the density-optimised version of 14nm FinFET (which allows smaller die sizes and more reasonable efficiency points), contributes to a shift towards either higher performance at the same power or the same performance at lower power.
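
One common first-order way to see the "same performance at lower power" half of that claim is the CMOS switching-power relation P = αCV²f. The sketch below assumes a transistor that can hold the same frequency at a lower supply voltage. The activity factor, capacitance, voltages and 3.0 GHz clock are hypothetical values chosen for illustration, not AMD or GlobalFoundries figures. Leakage, which FinFET also reduces, is ignored.

```python
def dynamic_power(alpha: float, capacitance: float, voltage: float, frequency: float) -> float:
    """First-order CMOS switching power: P = alpha * C * V^2 * f.

    alpha       -- fraction of the capacitance switched per cycle (activity factor)
    capacitance -- switched capacitance in farads
    voltage     -- supply voltage in volts
    frequency   -- clock frequency in hertz
    """
    return alpha * capacitance * voltage ** 2 * frequency


# Hypothetical operating points for illustration only (not AMD or GlobalFoundries data):
# the same 3.0 GHz clock reached at 1.00 V versus 0.90 V.
FREQ_HZ = 3.0e9
baseline_w = dynamic_power(0.2, 1e-9, 1.00, FREQ_HZ)
lower_v_w = dynamic_power(0.2, 1e-9, 0.90, FREQ_HZ)

# Same frequency (same performance) at lower voltage: power scales with V^2.
ratio = lower_v_w / baseline_w

if __name__ == "__main__":
    print(f"baseline: {baseline_w:.2f} W, lower voltage: {lower_v_w:.2f} W, ratio: {ratio:.2f}")
```

Because power scales with the square of voltage, dropping from 1.00 V to 0.90 V at the same clock gives a ratio of 0.9² = 0.81, or about 19% less switching power for the same work. The other option is to spend that headroom on clock speed instead. This simple formula does not capture how achievable frequency itself depends on voltage, so it only illustrates the direction of that trade.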

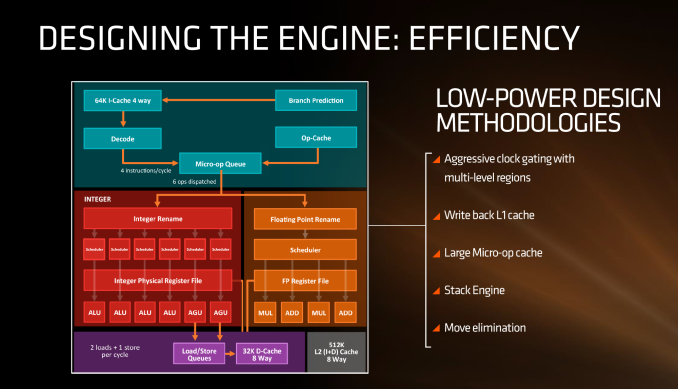

AMD stated in the briefing that power consumption and efficiency were constantly drilled into the engineers. As explained in previous briefings, many elements of the core involve a tradeoff between performance and efficiency (e.g. 1% more performance might cost 2% in efficiency). For Zen, the micro-op cache saves power because instruction data does not have to be fetched from further out. Improved prefetch, along with a couple of other features such as move elimination, also reduces the work done. On top of this, AMD states that cores will be aggressively clock gated to improve efficiency.
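
The tradeoff quoted above can be made concrete with a little arithmetic. The sketch below assumes that "efficiency" means performance per watt, which the briefing does not spell out. Under that assumption, power = performance / efficiency. The function name `implied_power_change` is hypothetical.

```python
def implied_power_change(perf_change: float, efficiency_change: float) -> float:
    """Relative power change implied by relative performance and efficiency changes.

    Assumes efficiency = performance per watt, so power = performance / efficiency.
    Inputs and output are fractions, e.g. 0.01 for +1% and -0.02 for -2%.
    """
    return (1.0 + perf_change) / (1.0 + efficiency_change) - 1.0


# The example trade from the text: +1% performance at a cost of 2% efficiency.
delta_power = implied_power_change(0.01, -0.02)

if __name__ == "__main__":
    print(f"power change: {delta_power:+.2%}")
```

In that example, the feature would draw about 1.01 / 0.98 ≈ 1.031 times the original power. That is roughly 3% more power for 1% more performance, which illustrates why such features get rejected when efficiency is the priority.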

We saw with AMD's 7th Gen APUs that power gating was also a design target, especially since staying at the best efficiency point for a given level of performance is usually the best policy. The layout of the diagram above seems to suggest that different parts of the core could be clock gated independently depending on use (e.g. decode vs FP ports), although we were not able to confirm whether this is the case. This also relies on very quick (1-2 cycle) clock gating implementations. Note that clock gating is different from power gating, which is harder to implement.
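
To show why independent, per-unit clock gating would help, here is a toy cycle-level energy model. It assumes that blocks can be gated independently, which is the unconfirmed reading of the diagram above. The unit names, power numbers and activity patterns are all hypothetical. The model treats gating as instantaneous, and it leaves out power gating, which would also remove leakage but is harder to implement.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    """A hypothetical core block with per-cycle power costs in arbitrary units."""
    name: str
    active_power: float      # switching power while doing useful work
    idle_clock_power: float  # clock-tree power burned while idle but still clocked
    leakage: float           # static leakage, paid every cycle the block is powered


def total_energy(units: list[Unit], activity: dict[str, list[bool]], clock_gating: bool) -> float:
    """Sum per-cycle energy across units.

    activity[name][cycle] is True when that unit is busy in that cycle.
    With clock gating, an idle unit's clock is stopped, so only leakage remains;
    without it, an idle unit still pays for its toggling clock tree. Power gating
    (which would also remove leakage) and gate/ungate latency are not modelled.
    """
    total = 0.0
    for unit in units:
        for busy in activity[unit.name]:
            if busy:
                total += unit.active_power + unit.leakage
            elif clock_gating:
                total += unit.leakage
            else:
                total += unit.idle_clock_power + unit.leakage
    return total


# Hypothetical numbers: a decode block busy 8 of 10 cycles, an FP block busy 2 of 10.
UNITS = [Unit("decode", 4.0, 1.0, 0.5), Unit("fp", 6.0, 1.5, 0.5)]
ACTIVITY = {
    "decode": [True] * 8 + [False] * 2,
    "fp": [True] * 2 + [False] * 8,
}

ungated = total_energy(UNITS, ACTIVITY, clock_gating=False)
gated = total_energy(UNITS, ACTIVITY, clock_gating=True)

if __name__ == "__main__":
    print(f"no clock gating: {ungated}, per-unit clock gating: {gated}")
```

With these made-up numbers, the gated total is 54 units versus 68 ungated. All of the saving comes from idle cycles, mostly the eight idle FP cycles. In real hardware, that saving only holds if the unit can be ungated within a cycle or two of being needed, which is why the gating latency mentioned above matters.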

216 Comments

JoeyJoJo123 - Thursday, August 18, 2016

The ignorance... It hurts... Original x86 (32-bit) was Intel-AMD developed.

AMD then developed x86-64, or 64-bit x86, and Intel continues to license it to this day.

There's no copying here. Someone did it first, then others are licensing that IP from them.

See: https://en.wikipedia.org/wiki/X86-64

>x86-64 (also known as x64, x86_64 and AMD64) is the 64-bit version of the x86 instruction set.

>The original specification, created by AMD and released in 2000, has been implemented by AMD, Intel and VIA.

That's why you might sometimes see drivers labeled AMD64 and be puzzled as to why, despite being on an Intel 64-bit CPU, the 64-bit driver you downloaded says AMD64 in its name. It's because it was an AMD-first technology, but it's usable on any x86-64 processor.

Bateluer - Thursday, August 18, 2016

Intel simply paid for the license to copy the technology AMD designed. They still copied it; they just legally paid for the right to do so.

Klimax - Saturday, August 20, 2016

Actually, that's not exactly correct. Intel was forced by Microsoft to adopt AMD's solution, despite Intel having its own parallel implementation, which was different. And Intel's version is still a bit different from AMD's. (Some instructions differ between the implementations, mostly relevant only to the OS.)

xenol - Thursday, August 18, 2016

IBM made the dual-core-on-a-single-die design.

ExarKun333 - Thursday, August 18, 2016

In many ways, Intel's 64-bit was superior to AMD's, but x86-64 was more backward compatible. I can see it both ways... different solutions to the same problem. Both companies have pushed each other...

TheMightyRat - Thursday, August 18, 2016

How is IA64 superior to AMD64? AMD64 can run 32-bit software without a performance hit and still run 64-bit software comparably to its Intel counterpart.

IA64 Itanium runs 64-bit software much slower than a Pentium 4 64-bit at the same clock and has a massive performance hit in 32-bit emulation (1/3 as fast). Aren't both of them based on Netburst?

EM64T only has more instructions than AMD64 because it also implements both AMD64 and IA64, which is no longer used in modern server software.

Klimax - Saturday, August 20, 2016

He was talking about Intel's x64, which was a backup plan in case Itanium failed.

Myrandex - Thursday, August 25, 2016

I don't think Itaniums were Netburst in architecture; it seemed to be a totally different architecture.

Gigaplex - Thursday, August 18, 2016

Itanium was novel but turned out to be a poor performer. It relied too much on good compilers optimising the instruction order.

KPOM - Friday, August 19, 2016

Wasn't Itanium based on "Very Long Instruction Word" architecture? Hence the long pipelines and reliance on clock speed? The Pentium M from Intel Israel righted Intel's ship and allowed them to take leadership of the x86 architecture back from AMD.