The Intel Xeon E5 v4 Review: Testing Broadwell-EP With Demanding Server Workloads

by Johan De Gelas on March 31, 2016 12:30 PM EST

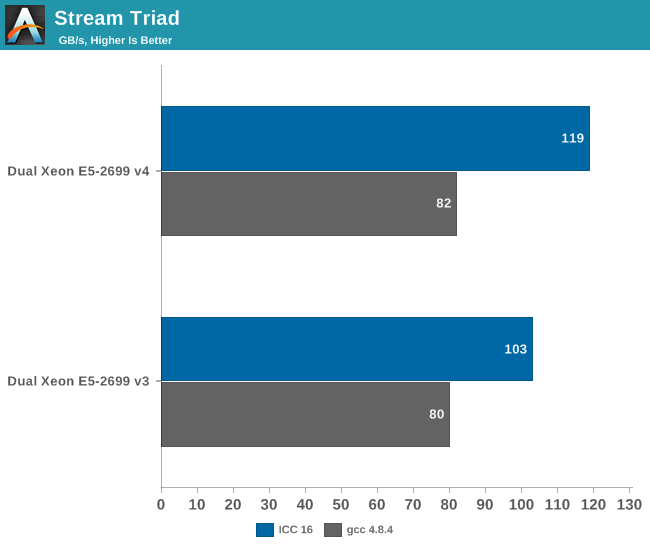

Memory Subsystem: Bandwidth

For this review we completely overhauled our testing of John McCalpin's Stream bandwidth benchmark. We compiled the Stream 5.10 source code with either the Intel compiler (icc) for Linux version 16 or gcc 4.8.4, both 64-bit. The following compiler switches were used with icc:

-fast -openmp -parallel

The results are expressed in GB per second. The following compiler switches were used on gcc:

-O3 -fopenmp -static
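As a rough illustration of how a Stream figure in GB per second comes about, the sketch below applies the byte accounting of the Triad kernel (a[i] = b[i] + q*c[i]), one of the four kernels in Stream: two 8-byte reads and one 8-byte write per element, divided by the elapsed time. The function name, the array length and the timing are hypothetical values chosen for illustration, not measurements from this review.

```python
BYTES_PER_DOUBLE = 8


def triad_bandwidth_gbs(n_elements: int, seconds: float) -> float:
    """Bandwidth credited to one Triad pass, in GB/s (1 GB = 1e9 bytes)."""
    # Triad touches three arrays per element: read b, read c, write a.
    bytes_moved = 3 * BYTES_PER_DOUBLE * n_elements
    return bytes_moved / seconds / 1e9


if __name__ == "__main__":
    # Hypothetical run: 80 million doubles per array, one pass in 20 ms.
    print(f"{triad_bandwidth_gbs(80_000_000, 0.020):.1f} GB/s")
```

The arrays have to be far larger than the combined caches for a number like this to reflect DRAM rather than cache bandwidth.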

Stream allows us to estimate the maximum performance increase that DDR4-2400 (Xeon E5 v4) can offer over DDR4-2133 (Xeon E5 v3).

The Xeon E5 v4 with DDR4-2400 delivers about 15% higher performance than the v3 when we compile Stream with icc. To put this into perspective: DDR4-1600 delivered 80 GB/s.

The difference between DDR4-2400 and DDR4-2133 is negligible with gcc.
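For context, the nominal gain from the faster memory can be estimated from transfer rates alone. The sketch below assumes the usual 64-bit (8-byte) DDR4 channel and four memory channels per socket; the channel count and the helper name are assumptions not stated in the text above, and the result is a theoretical ceiling rather than a Stream measurement.

```python
def peak_bandwidth_gbs(mega_transfers_per_s: int, channels: int = 4,
                       bytes_per_transfer: int = 8) -> float:
    """Theoretical peak bandwidth of one socket in GB/s (1 GB = 1e9 bytes)."""
    # channels=4 is an assumption about the platform, not a figure from the review.
    return mega_transfers_per_s * 1e6 * bytes_per_transfer * channels / 1e9


def nominal_gain_percent(new_rate: int, old_rate: int) -> float:
    """Relative increase in peak bandwidth when only the transfer rate changes."""
    return (peak_bandwidth_gbs(new_rate) / peak_bandwidth_gbs(old_rate) - 1) * 100


if __name__ == "__main__":
    print(f"DDR4-2133: {peak_bandwidth_gbs(2133):.1f} GB/s per socket")
    print(f"DDR4-2400: {peak_bandwidth_gbs(2400):.1f} GB/s per socket")
    print(f"nominal gain: {nominal_gain_percent(2400, 2133):.1f}%")
```

Under these assumptions the transfer-rate ratio is 2400/2133, or roughly 12.5%. Measured gains can differ from that, since Stream results also depend on the compiler and the rest of the platform.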

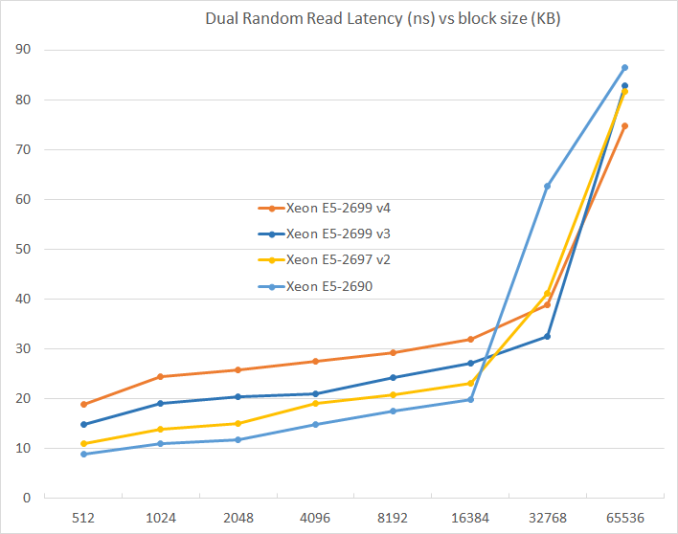

Memory Subsystem: Latency

To measure latency, we use the open source TinyMemBench benchmark. The source was compiled for x86 with gcc 4.8.2 and optimization was set to "-O2". The measurement is described well by the manual of TinyMemBench:

Average time is measured for random memory accesses in the buffers of different sizes. The larger the buffer, the more significant the relative contributions of TLB, L1/L2 cache misses, and DRAM accesses become. All the numbers represent extra time, which needs to be added to L1 cache latency (4 cycles).

We tested with dual random read, as we wanted to see how the memory system coped with multiple read requests.
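Because the manual states that the reported numbers are extra time on top of the L1 latency, a total access time can be reconstructed by converting the 4-cycle L1 latency to nanoseconds and adding it back. The sketch below does that; the 2.2 GHz clock and the 90 ns reading are hypothetical inputs for illustration, not results from this review.

```python
L1_LATENCY_CYCLES = 4  # from the TinyMemBench description quoted above


def total_latency_ns(extra_ns: float, clock_ghz: float) -> float:
    """Add the L1 latency (converted to ns) to a reported extra time."""
    l1_ns = L1_LATENCY_CYCLES / clock_ghz  # cycles divided by cycles per ns
    return extra_ns + l1_ns


def ns_to_cycles(latency_ns: float, clock_ghz: float) -> float:
    """Express a latency in core clock cycles."""
    return latency_ns * clock_ghz


if __name__ == "__main__":
    # Hypothetical reading: 90 ns of extra time on a 2.2 GHz core.
    total = total_latency_ns(extra_ns=90.0, clock_ghz=2.2)
    print(f"{total:.2f} ns, about {ns_to_cycles(total, 2.2):.0f} cycles")
```

The L1 term is small next to a DRAM access, but it matters for the smaller buffer sizes, where the extra time is only a few nanoseconds.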

The larger the L3 caches get, the higher the latency. Latency has almost doubled from the Xeon E5 v1 to the Xeon E5 v4, while capacity has almost tripled (55 MB vs 20 MB). Still, this will result in a small performance hit in many non-virtualized applications that do not need such a large L3.

112 Comments


patrickjp93 - Friday, April 1, 2016 - link

Knight's Landing: 730 mm^2, also on the 14nm platform

extide - Friday, April 1, 2016 - link

Is it really that big..? Wow, I knew it was big, but didn't know it was that big. Got a source on that?

Kevin G - Friday, April 8, 2016 - link

I'll second a link for a source. I knew it'd be big but that big?

extide - Friday, April 1, 2016 - link

I know you meant Reticle, but that was a pretty funny typo, heh.

Kevin G - Friday, April 8, 2016 - link

Autocorrect has gotten the best of me yet again.

extide - Friday, April 1, 2016 - link

And, I know how big GM200 and Fiji are, but I am talking about big GPU's on 14/16nm. All signs are currently pointing to <300mm^2 for the first round of 14/16nm GPU's.

lorribot - Thursday, March 31, 2016 - link

Given the way Microsoft and others are now licensing by the core and in large non-splittable packages (Windows 2016 Datacenter is in blocks of 16 cores, a dual socket server with 44 cores would need 48 core licences) the increasing core count has limited appeal over small numbers of faster cores when looking at virtualised environments. Those still in the physical world will still have to pay per core but may have to buy 4 std Windows licenses.

When it comes to doing your testing, it should reflect these costs and compare total bang per buck when dealing with performance.

Red Hat still licences per socket but don't be surprised if they go per core too.

JohanAnandtech - Friday, April 1, 2016 - link

Back in 2008, I had a sales person explaining the license models of Microsoft to me in our lab. From that point on, we have invested most of our time and resources in linux server software. :-D

extide - Friday, April 1, 2016 - link

Enterprise linux isn't free, either ya know

rahvin - Friday, April 1, 2016 - link

Support isn't free on the FOSS side but the software is. Redhat is never going to charge more per "cores" for support, that's ridiculous and would result in rivals stealing their support contracts. If licensing costs are that bad that you are dumping hardware you really should be looking at moving services to Linux and virtualizing the Windows servers so you can limit the core count and provide more horsepower. Anyone putting Microsoft on bare hardware these days is nuts. Although the consolation is that they get to pay MS's exorbitant tax on software. Linux should be the core component of any IT services and virtualized servers where you need proprietary server software.